|

We're almost on the final stretch!!!

Update: I now have a Google Play Store Developer license! This means I can publish QTOO & all my future apps (ahhh!!). Looks like it’s time to get an Android phone... so we can look forward to being actual published developers!! Check out <link> if you’re interested in seeing what two year 1 IT students are capable of :-)

More updates: I have linked a Firebase project to our app, so we have actual data storage capabilities. I am currently working on user accounts, which can be used to store information like a contact email, a user’s frequent orders, etc. We’re looking to link up all the pages by Tuesday, so that we can publish our app to the Google Play Store & do some testing. All in all, we’re stressed, but we continue to hope for the best.

Sometime between Sunday & now (Wednesday), I found myself giving thought to how the assignment is apparently going to be graded. One of the components is how challenging our project was. We were happy, yes, that we were finally making discernible progress on our project. But a web app? Markup & JavaScript? Is that even anywhere near the ballpark of sufficient? Well, I didn’t think so. 6 hours a week for 4 weeks put aside for us to work on this project & the best thing we could come up with was a web app? Deffffffinitely not enough.

So, we started talking. Because communication is key. With our friends, with lecturers, with classmates. And several people we talked to pointed us towards Adobe PhoneGap: essentially, software that can build a mobile app from a web app written in HTML/CSS & JavaScript. This (of course) blew our minds!! We’d never heard of such technologies before. We did some research, and found that PhoneGap wasn’t as novel a technology as we originally thought. Similar tools were available, like Evothings Studio, the Ionic framework, and Apache Cordova. This realisation added to our project’s prospects. Cordova could bridge the gap between web and app for us, without needing us to sacrifice an arm, a soul, our sleep, & our every waking second. The knowledge that we could still eventually produce a mobile app recharged our engines (brains), & pushed us to keep working on the project.

On the more technical side of things, I found a Cordova plugin for working with iBeacons & was over the moon. Or, at least, until I started trying my hand at using the plugin. The documentation for the plugin was less than informative, the example app made available on GitHub didn’t work, & I didn’t have any actual iBeacons to work with. I spent a good day looking for iBeacon scanners that would give me enough information about the iBeacons in my vicinity that I could use their UUID, major, & minor identifiers. No luck there. Different apps revealed different BLE devices, & as for the information I did manage to scrape together: either the code taken right from the plugin’s source repo on GitHub was wrong, or my shady iBeacon scanner was giving me false information.

Then I found that Estimote, one of the leading companies in the iBeacon market, had an app on the App Store (available for iOS!) that allowed me to turn my iPhone into a virtual beacon. I’m pretty sure I actually exclaimed ‘eureka’ when I found this feature. I was over the moon that all the work I put into this iBeacon stint was actually paying off, & I was going to have a virtual iBeacon to test my app with, & everything was going to end happily ever after.

...yeah, that’s far from the actual story. I did some deeper digging & found that I could not modify the range of my virtual beacon, & that in itself was enough to ruin all my plans, since our entire app was centered around monitoring beacons (a type of tracking that detects entry into & exit from a beacon’s range). Either way, I (not-very-quickly) realised that iBeacons were a dead end. I had to find another method for indoor geolocation. I needed it to be quick, accurate, & non-taxing on the device. After a short stint of googling, I (finally) stumbled upon the geolocation plugin for Cordova. It makes use of JavaScript’s Geolocation API, & is significantly better documented than the aforementioned iBeacon plugin. I check out of week 14 with ‘not all hope is lost’. What a week!
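For anyone curious what "monitoring" could look like once iBeacons are swapped for plain position fixes, here is a minimal sketch. It is not QTOO's actual code: `LatLng`, `RegionMonitor`, and the 50 m radius in the usage note are names and numbers made up for illustration, and it assumes the position fixes are accurate enough for the chosen radius (which is far from guaranteed indoors). It uses the haversine formula to measure the distance to a venue and reports an event only when the user crosses the boundary.

```typescript
// Illustrative sketch only: every name here is hypothetical, not QTOO's code.
interface LatLng {
  lat: number; // degrees
  lng: number; // degrees
}

type RegionEvent = "enter" | "exit";

const EARTH_RADIUS_M = 6371000;

function toRadians(degrees: number): number {
  return (degrees * Math.PI) / 180;
}

// Great-circle distance between two points in metres (haversine formula).
function distanceMetres(a: LatLng, b: LatLng): number {
  const sinLat = Math.sin(toRadians(b.lat - a.lat) / 2);
  const sinLng = Math.sin(toRadians(b.lng - a.lng) / 2);
  const h =
    sinLat * sinLat +
    Math.cos(toRadians(a.lat)) * Math.cos(toRadians(b.lat)) * sinLng * sinLng;
  return 2 * EARTH_RADIUS_M * Math.asin(Math.sqrt(h));
}

// Tracks whether the user is inside a circular region around a venue.
class RegionMonitor {
  private inside = false; // assume the user starts outside the region
  private centre: LatLng;
  private radiusMetres: number;

  constructor(centre: LatLng, radiusMetres: number) {
    this.centre = centre;
    this.radiusMetres = radiusMetres;
  }

  // Feed in each new position fix; returns an event only on a boundary crossing.
  update(position: LatLng): RegionEvent | null {
    const nowInside =
      distanceMetres(this.centre, position) <= this.radiusMetres;
    if (nowInside === this.inside) {
      return null;
    }
    this.inside = nowInside;
    return nowInside ? "enter" : "exit";
  }
}
```

In a browser or Cordova app, the fixes would presumably come from `navigator.geolocation.watchPosition`, passing each `coords.latitude` and `coords.longitude` pair to something like `new RegionMonitor(venue, 50).update(...)`. Keeping the distance maths free of any device API means it can be tested without a phone in hand.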

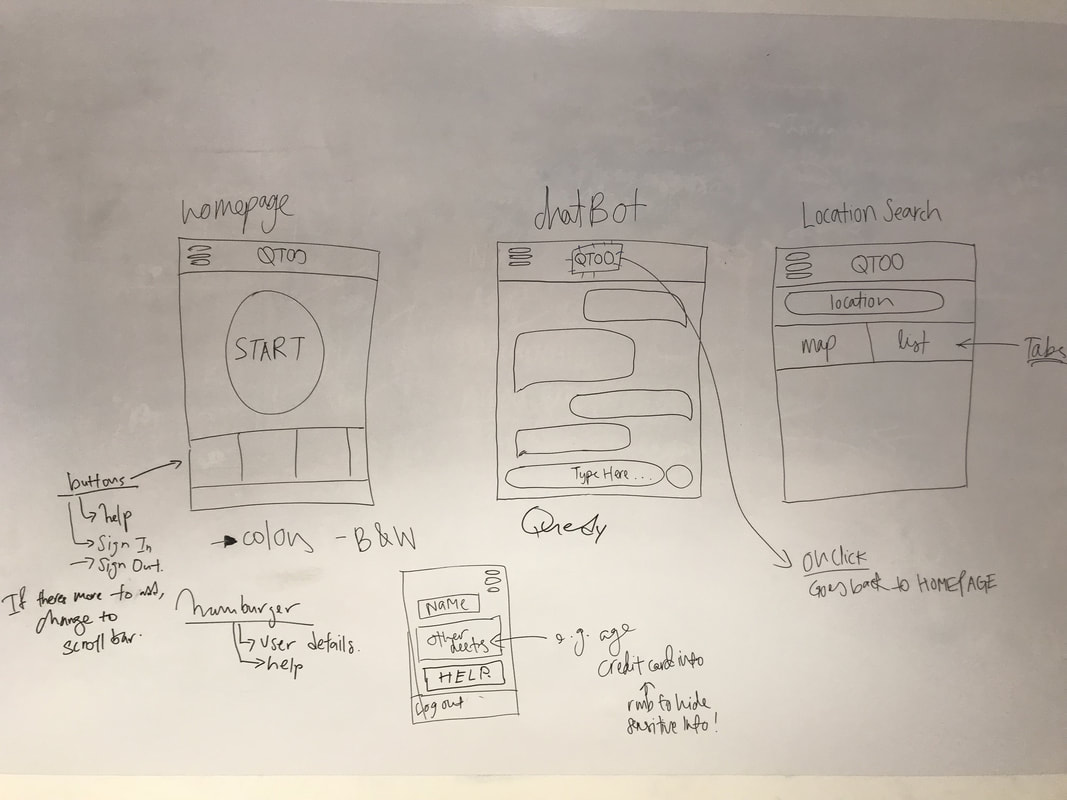

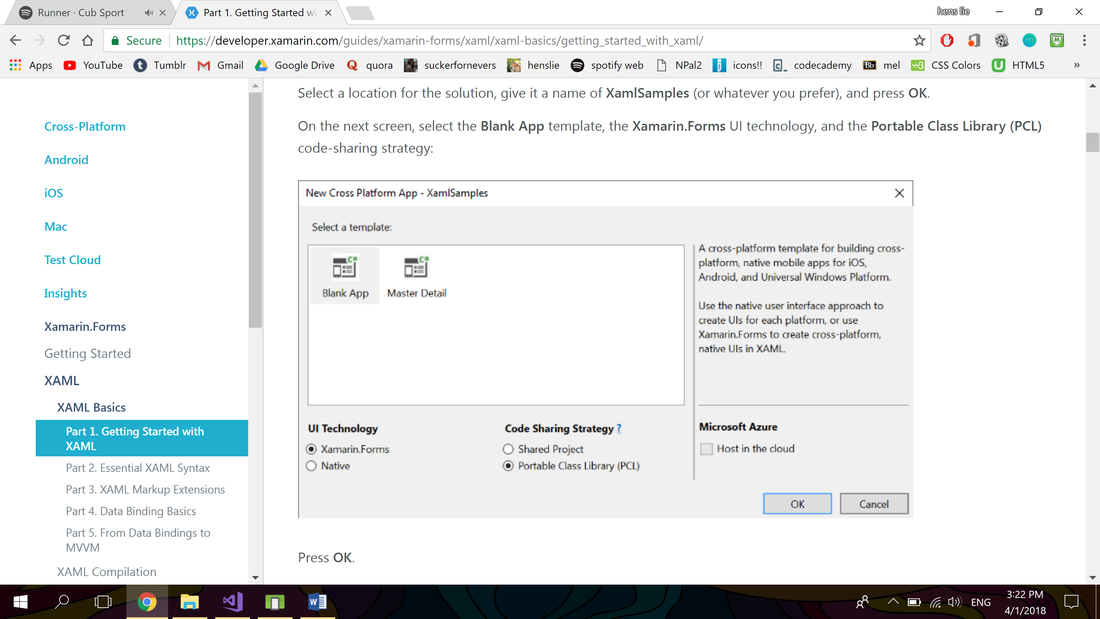

So I guess I’ll start with an overview of what happened. This week, we jumped (dived) right into the deep end of mobile app development. I say this with these facts in mind:

i. Neither Li Yun nor I are MacBook users, so navigating the MacBook itself brought with it a lot of new experiences & instances where we needed to look up how something really basic worked.

ii. We were trying to use Xamarin Studio to create our app. In the beginning, Xamarin Studio sounded like the stuff of dreams. Cross-platform native apps using C#, a language we’re already familiar with? What we didn’t take into account was the fact that the UI of a Xamarin.Forms app is written in XAML. When we first took real notice of this, we didn’t think much of it. I mean, XAML is just markup, & both of us have a decent background in HTML. However, we quickly found out that XAML is much stricter than HTML, & has a much steeper learning curve. We were going to have to put in significant hours just to learn a new markup language to get our app’s UI out.

iii. Each passing hour made it clearer that mobile app production is much more complex than we originally thought, & neither one of us had any idea where to start.

Looking back on this, it’s quite clear that our original plan was beyond ambitious. It required us to pick up a full suite of skills in roughly a month’s time, while simultaneously going for class & keeping up with our 4 (my 5) ongoing assignments. To give some context, all these eye-opening realisations happened by Wednesday’s portfolio class. So since Thursday (or strictly, Wednesday evening), we’ve been diverting our effort into building, first, a web app. This decision was made in light of the fact that both Li Yun & I have considerably more skill in HTML/CSS + JavaScript than in XAML & C#.

Furthermore, one thing we noticed back when we were still trying to make Xamarin Studio work is that beginner-friendly online resources for XAML & C# are lacking. The top hit for most of our searches was a link to the respective language’s documentation. Now, I’m not saying that documentation provides bad information. In fact, our problem was that the documentation was so comprehensive that each page we read would answer our queries by introducing new concepts. Now imagine if we had to do this for every single question we had. Talk about a 4-week wild goose chase. 4 days later, we have a simple UI that we could’ve only DREAMT of achieving with Xamarin Studio. Pictured above: UI diagrams for QTOO, and navigational behaviors.

Update: we hit our first obstacle. Based on the feedback we got from Dialogue in the Dark, we understood that an iOS app would have a much greater market reach than an Android app. As such, we decided to follow through on our goal of creating an iOS app. However, an Apple device is required to develop iOS apps, and neither Li Yun, my partner, nor I have one. So, right now we are in the midst of trying to procure an Apple device. Even with this in our way, we are trudging on with more extensive research & further reading.

Regarding the technological aspects of QTOO, we are luckily able to work incrementally on its UI. This is because we are building QTOO with Xamarin, which produces cross-platform apps. Pictured below: me doing research, finding out the different kinds of projects we can create with Xamarin, as well as how they each differ. With this knowledge, we are able to determine the best configuration to use for QTOO.

Another update: Just received word that the school does have MacBooks that can be rented to us, but because most of the newer MacBooks have been deployed for Open House (which just started today), I can only get the machine next week. So, stay tuned for more details regarding our (my) beef with Apple. In the meantime, we will be developing QTOO’s UI!

Side note: we submitted a short proposal for QTOO to the Microsoft Imagine Cup yesterday. Best case scenario: we get through to the next round. Worst case scenario: we don’t hear back from them, and never speak of this ever again.

On the more technical side of things, ya girl created the Visual Studio project for QTOO, navigated to MainPage.xaml and realised that I don’t know any XAML. So, I am embarking on another journey to pick that up. If you are interested in checking out some of the notes I have taken so far, click the "Read More" below.

On Friday, 29 December 2017, I met up with a representative of Dialogue in the Dark to pitch our idea for Out of the Queue to him, to evaluate the app’s viability as well as to get some feedback on our proposed features. He gave me a significant amount of feedback that we will definitely be using in the development of QTOO.

Here are the notes I took down from the session:

- Firstly, he noted that the concerns we voiced and considered in coming up with QTOO are very relevant. The difficulties we named are indeed experienced by the visually impaired community.
- Do sufficient research to ensure that there isn’t already a similar app on the market. If one already exists, we should come up with more features to add further value to our app.
- Within the visually impaired community, the majority of people use iPhones. This is because iOS provides far superior accessibility features to Android’s. Beyond the visually impaired, even the elderly have a general preference for iOS, for its ease of use.
- In terms of whether they prefer giving audio or text input, they prefer being able to dictate. However, because QTOO is going to be used in noisy environments, he recommends giving users suggested phrases to select from rather than an empty text box.
- As for the output format, I asked if they found a significant difference between audio output and text (which they would use a screen reader for). He told me that people just starting out with smartphones generally prefer audio output for its ease of use. However, as they grow more familiar, their preference leans towards using a screen reader. This is because they can control the speed at which they receive information.
- For our user interface, we should stick to a high-contrast color palette, with simple design. This is to help visually impaired users with partial sight navigate the app.
- To help with accessibility, we need to remember to label all our buttons well. Each label should be a short description of the button’s function.
- Our app should be designed with efficiency and effectiveness in mind. Features should be intuitive, and the app should be easy to use.
- He also highlighted that it is important to have a detailed but concise help section that users can go to when they face any difficulties.
- QTOO should let users send us feedback, so we can continually optimise our app for our users and create the best possible experience.
- During QTOO’s development, we should place a lot of focus on how QTOO can relieve stress: guiding users through new environments and providing assistance wherever possible, to make venturing into new environments and partaking in new experiences as smooth as possible.
- Finally, I voiced my concern that QTOO would not be well received by the visually impaired community. This concern was raised when someone else brought to my attention how the visually impaired are creatures of habit and rarely venture out of their comfort zone. He reassured me that the visually impaired would be very receptive to QTOO. Currently, venturing into new environments is very stressful for them, and that’s why they don’t do it. However, if QTOO is able to guide them through and aid them in this process, they would be much more open to venturing into new places.

Furthermore, he mentioned that the community at Dialogue in the Dark would be interested in helping us test out QTOO. So, we are looking at getting a prototype out soon, so that actual users can test our app and give us feedback in time for us to make final brush-ups before demo day.

In conclusion, we have found out that we do have a very viable user base for QTOO, and that QTOO is addressing very relevant issues faced by the population in intuitive, tech-forward ways.

To begin, this is a list of just some of the populations Out of the Queue aims to bring a positive impact to.

Target Demographic

- Visually impaired
- Hearing impaired
- People with a sensory disorder
- People with anxiety
- Dyslexic people
- People with a learning disability
- Tourists (those who don’t speak the local language, or are unfamiliar with the local customs/culture)
- People with autism
- Impatient people

Overview

Appropriately titled ‘Out of the Queue’ (Q2 for short), our app will become a necessity in your life before you even know it. We aim to develop a mobile app that facilitates communication of information in public. Examples of situations where Q2 will be used include placing an order at a fast food restaurant, waiting for a queue number to be called in the bank, or even getting help locating an item in a store.

Each client of the app (i.e. restaurants, banks, etc.) will provide information like their available services or menu to us & we will implement the information into the app. An example of implementation: a restaurant provides us with their menu, along with optional descriptions for each item on the menu. Users can pose queries to a chatbot, which is able to audibly relay the relevant information to the user, or provide the needed guidance. Beyond just receiving information, users can choose to make orders & even payment through our app. This feature will mean that users don’t have to endure the stressful, ineffective process of making their order over the counter.

Furthermore, for fast retailers in particular, our app will bring immense benefits. These include places like McDonald’s, LiHo, Koi Cafe, & more, that use a queue number system: each customer is given a queue number upon ordering, which is then displayed on a digital signboard when the order is ready, so the customer can collect their order at the counter. With our app, the retailer is able to send notifications straight to the user’s smartphone, which can produce either a beep tone or a custom vibration. This reduces the confusion as well as the anxiety that may come with the traditional ordering process.

Above all of these features, our app aims to be capable of being seamlessly integrated into our users’ lives. Talking to the chatbot feels like second nature, & additional features bring ease, efficiency, & elegance to public communication. Having this entire suite of features & functions, our app effectively replaces the traditional queuing system. And this is our goal. To redefine communication, with Out of the Queue. |
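To make the queue-number idea above a little more concrete, here is a rough sketch of the flow: an order is placed, a queue number is issued, and the customer's phone is alerted once when the retailer marks the order ready. Everything in it is an assumption for illustration: `OrderQueue`, `AlertStyle`, and the `notify` callback are made-up names, and `notify` simply stands in for whatever push-notification service the real app would end up using.

```typescript
// Illustrative sketch only: every name here is hypothetical, not QTOO's code.
type AlertStyle = "beep" | "vibrate";

interface Order {
  queueNumber: number;
  items: string[];
  alert: AlertStyle; // how this user wants to be told the order is ready
  ready: boolean;
}

class OrderQueue {
  private nextNumber = 1;
  private orders = new Map<number, Order>();
  private notify: (order: Order) => void;

  // `notify` is a placeholder for the app's real notification mechanism.
  constructor(notify: (order: Order) => void) {
    this.notify = notify;
  }

  // Register an order and hand back its queue number.
  place(items: string[], alert: AlertStyle): number {
    const queueNumber = this.nextNumber++;
    this.orders.set(queueNumber, { queueNumber, items, alert, ready: false });
    return queueNumber;
  }

  // Called by the retailer; alerts the customer at most once per order.
  // Returns false for unknown queue numbers or orders already marked ready.
  markReady(queueNumber: number): boolean {
    const order = this.orders.get(queueNumber);
    if (order === undefined || order.ready) {
      return false;
    }
    order.ready = true;
    this.notify(order);
    return true;
  }
}
```

A retailer-side call such as `queue.markReady(12)` would then trigger the customer's chosen alert, replacing the glance at the digital signboard.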

|